JOSEPH TAME’S 8th ANNUAL

LIVE-STREAMED TOKYO MARATHON

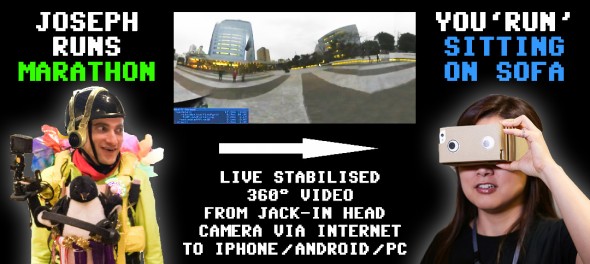

The world’s first sports event broadcast

in full 360-VR from the athlete’s head

History was made on February 28th 2016

Eight years in the making:

Tokyo Marathon 2016 sees my 360 degree dream become a reality.

Right from the start of this livestream experiment, that is, back in February 2009 when I first ran the marathon with an iPhone strapped to my head, there were two pieces of technology that I longed to have access to:

1) Stabilisation software or hardware

2) A 360 degree camera

When you are carrying out a mobile broadcast, stabilisation is key. Without it, your audience is liable to become nauseous within a couple of kilometres, making the stream unwatchable for extended periods. Over the years I have used all sorts of methods to provide a more stable stream, from wearable harnesses clamped tightly to my torso, to built-in camera stabilisation. Nothing has really been able to deal with the range of movements that running produces.

And then the 360 aspect: a marathon is a true 360 degree experience. It’s not just the road immediately in front of you; it’s the 1 million+ members of the public lining the course to your sides, it’s the runners in crazy costumes behind you, it’s the weather above you.

An early prototype of my 2016 costume

Multiple cameras help to a certain extent, but you’re still limited to where you, the cameraman, decide to point those cameras.

But back in 2009, these technologies didn’t really exist – at least not outside of professional film studios, and certainly not in a portable format.

Up until 2016, this was the closest I’d got to a 360 camera!

Fast forward to late 2015, and I’m at the Sony Computer Science Laboratories open day. There, I see a demo by a researcher, Shunichi Kasahara, who’s spent two years working on a wearable 360 degree camera system that’s paired with a VR headset to allow other people around you to experience ‘being you’ in real time.

In his own words, Kasahara’s focus is on exploring interactive systems for the transmission of real-time first-person experience, the storage and sharing of total experiences, and humans augmenting humans. His purpose is to trace the contours of the relationships between humanity and technology, and between human beings themselves, as they become redefined by the “human as medium” phenomenon.

Kasahara’s system, Jack-in Head, not only provided a real-time stitched 360 video feed from the cameras on the other person’s head – it also stabilised the image, locking its orientation to match your (the viewer’s) own. This meant that even if the person wearing the cameras turned their head around, you would see a consistent, stable image that only turned when you turned your own head.
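To give a rough idea of what that orientation lock involves, here is a minimal sketch in Python. It is not the Jack-in Head implementation: it assumes the stitched frame is a panorama whose columns span 360 degrees of yaw, it only handles left/right head turns (not tilt or roll), and the function names `yaw_to_column_shift` and `stabilise_row` are made up for illustration.

```python
def yaw_to_column_shift(yaw_degrees: float, frame_width: int) -> int:
    """Convert a yaw angle (degrees, clockwise) into a whole-pixel column shift."""
    return round((yaw_degrees % 360.0) / 360.0 * frame_width) % frame_width


def stabilise_row(row: list, camera_yaw_degrees: float) -> list:
    """Rotate one row of panorama pixels so column 0 always faces the same
    world direction, whatever way the camera wearer's head is turned."""
    width = len(row)
    shift = yaw_to_column_shift(camera_yaw_degrees, width)
    # Output column j shows world yaw j; the camera recorded that direction
    # at column (j - shift), wrapping around the panorama seam.
    return [row[(j - shift) % width] for j in range(width)]


# Toy 4-pixel panorama: with the head facing north the row reads N, E, S, W.
facing_north = ["N", "E", "S", "W"]
# After the wearer turns 90 degrees to the right, column 0 sees east instead.
facing_east = ["E", "S", "W", "N"]

print(stabilise_row(facing_north, 0))   # unchanged
print(stabilise_row(facing_east, 90))   # rotated back so north is column 0
```

Each viewer’s headset would then pick its own window out of the stabilised panorama, which is why the picture only moves when the viewer moves.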

I gave it a go – it was pretty mind-blowing. I really felt like I was in the position of the person standing next to me.

Fundamentally this was exactly the kind of system I needed for the Tokyo marathon …minus the big computer and all the cables.

It was a couple of months later (in fact just 6 weeks before the marathon) when I contacted Kasahara-san again with a proposal that we collaborate for the race. I was grateful that he accepted this somewhat crazy challenge, which would require him to somehow shrink his system down to turn it into a portable solution.

Visiting Kasahara-san’s lab

It was touch and go as to whether this could be done in time: the software needed to be ported to Windows so it could run on a tiny battery-powered Intel NUC.

Not only that, but we needed to find a 360 streaming platform that could take the feed from my head and convert it into something that could be viewed on smartphones and web browsers.

Here, the good people behind the Panoplaza platform stepped up to the challenge, and rapidly developed a web viewer to complement the mobile apps they had previously developed.

The final piece in the jigsaw was the broadcast device: how could we actually send a data-heavy stream of the Windows PC’s mirrored screen wirelessly to Panoplaza’s server? That’s where LiveU came into the picture. LiveU, specialists in mobile live streaming technology (as used by thousands of mainstream broadcasters worldwide), had a new model out, the LU200, that was ultra-portable and offered plug-and-play streaming of any signal input through HDMI or SDI.

The 360 output image (with embedded audio from the on-helmet mic) was sent to the receiving RTMP server via LiveU in standard 16×9 1080p. There it was re-scaled and streamed as a 360 image to online viewers who were watching either using Google Cardboard (kindly provided by Hacosco) or on a Chrome web browser.
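For the curious, here is a small sketch of how a viewing direction could be looked up in such a 1080p frame. It assumes the 360 image fills the frame in the common equirectangular layout (yaw across the width, pitch down the height); which projection was actually used is an assumption here, and `direction_to_pixel` is a hypothetical helper, not part of Panoplaza’s or LiveU’s software.

```python
def direction_to_pixel(yaw_degrees: float, pitch_degrees: float,
                       width: int = 1920, height: int = 1080) -> tuple:
    """Map a viewing direction to a pixel in an equirectangular frame.

    yaw: 0-360 degrees, mapped left to right across the frame.
    pitch: +90 (straight up) to -90 (straight down), mapped top to bottom.
    """
    pitch = max(-90.0, min(90.0, pitch_degrees))  # clamp to the valid range
    u = (yaw_degrees % 360.0) / 360.0             # 0.0 .. 1.0 across the width
    v = (90.0 - pitch) / 180.0                    # 0.0 (top) .. 1.0 (bottom)
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return x, y


# Looking straight ahead at the horizon, then straight up behind you.
print(direction_to_pixel(0, 0))
print(direction_to_pixel(180, 90))
```

Because a 16×9 frame is not the 2:1 shape a full panorama naturally has, the image needs re-scaling on the receiving end before a viewer can wrap it around you.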

This whole setup was powered by a couple of batteries originally designed for jump-starting cars!

The 360 degree camera kit

Then we come to the second camera and its super-omg-amazing stabilisation.

Over the past few years, with the increasing popularity of GoPro & other sports cameras, we’ve seen big developments in lightweight steadicam technology. The problem with non-powered solutions, though, is that whilst they’re good at cancelling out the shake of your hand, they still require you to put considerable effort into holding the camera still and pointing it in the right direction. When running, that’s just not an option.

Likewise with the optical image stabilisation you have in your iPhone 6s Plus, Sony Handycam, or DSLR lens – all very well for minor shakes, but no match for big up and down arm movements.

Until last year, there was no alternative. Powered steady gimbals were only made for larger cameras. But then smaller powered gimbals came onto the market, and for the first time it was possible to get a nice smooth shot, regardless of whether you were walking, cycling, or running.

I’ve been a fan of the US based camera equipment manufacturer ikan for about 7 years now – it was their lightweight but tough shoulder rigs that I used as the base frame for the first generation ‘iRun’ mobile broadcasting machine.

When looking for a powered gimbal, I trawled through a lot of reviews covering several popular manufacturers, and asked fellow video folks what their experience had been like. Overall, it was clear that the technology was still young, with a lot of reports of these things freaking out and going into spasms. The other issue was battery life – the DJI Osmo seemed to perform particularly badly.

This was one of the deciding factors that led me to choose the ikan FLY-X3-GO: it uses off-the-shelf batteries, meaning you don’t have to spend a fortune on custom batteries that may not perform as expected.

Another feature I liked was the ability to detach the controller and put the gimbal head on the end of a monopod, or suction cup on your car windshield etc, and still control it via an extension cord.

With the FLY-X3-GO not available in Japan, I reached out to ikan and was delighted by their generous offer to provide me with a unit for the marathon.

…and that’s how this year my Periscope stream stayed as solid as a running rock. I must admit I was pretty taken aback by just how steady it was, and I continue to be amazed by how it absorbs movement (airplane landings are particularly impressive). Highly recommended!

When it came to the actual race, as I suspected, the costume regulations were even tighter than in previous years (boooooring!!). I was told to remove my pinwheel hat and the iron frame that I had stayed up half the night making. All they allowed me in with was my GoPro and gimbal …which was all I needed to get my live stream going.

Later on in the race I donned my Jack-in Head helmet, streaming live in 360 for the most interesting sections of the course. A world first!

This year I also had the support of the video production and project planning team at Breaker Inc. Dan was generous with his time, helping me produce a series of videos in the lead-up to and following the big day, resulting in much better documentation of the process than in previous years.

The support extended to the course on race day: friends Nami & Phil, John Daub and Jonathan all kept me stocked with fuel for body and devices. Simon and Martina came along to challenge me to eat natto (to say I am not a fan of natto is an understatement) and a Pocari Sweat vs. Aquarius taste test (I guessed right!). The Ugawa Pies were also there, shooting some scenes for inclusion in this video profile made for Coto Academy. Kasahara-san and his colleague Kei provided tech support for the 360 camera, whilst my extended family were there at various points along the course to cheer me on.

We were also joined by thousands of fans online, with messages of support sent in via Facebook, Twitter and Periscope. The crowds along the course were also a delight as always – it truly is the day that Tokyo unites!

My time, as usual, was very slow – something like 6 hours 20 minutes. This is what happens when you live stream your run! Earlier that month I had run the entire course twice in training, both times completing it in about 3 hours 45 minutes.

So, having achieved my dream of live streaming the marathon in 360, where can I take it next? To be honest, due to the ever-tightening regulations on what you can and can’t take onto the course, I’m considering not live-streaming it next year, but rather just running it, and enjoying interacting with people along the course without any worries about batteries. I might have to sneak a pinwheel in though… We’ll see!

Thanks to everyone who helped make this dream of mine come true. It was a true team effort!

Learn more about the Jack-in Head project (by Sony CSL & Rekimoto Lab) here.